The financial commitment to artificial intelligence within the healthcare sector has reached an unprecedented scale, with global investments dwarfing the combined technology spending of the next four leading vertical industries. Despite this massive influx of capital, which has surged past $1.5 billion within the current calendar year, a sobering reality persists: many of these ambitious AI initiatives are failing to transition from experimental pilot programs into value-generating clinical assets. This stagnation is rarely the result of a deficiency in the sophistication of the machine learning algorithms themselves; instead, it is a direct consequence of the poor quality and structural inadequacy of the underlying legacy data systems upon which these models depend. When the foundation is fractured, even the most advanced predictive models become unreliable. The gap between theoretical potential and operational reality highlights a critical need for a total reassessment of data architecture to ensure that the massive investments being made do not result in a series of expensive, fragmented, and ultimately abandoned technology projects.

Recognizing Patterns of Operational Data Failure

One of the most prominent warning signs that an organization is not prepared for machine learning applications occurs when subject matter experts are forced into a cycle of manual verification. If clinical staff and administrative leaders find themselves spending more time auditing and correcting AI-generated errors than they would have spent performing the original task manually, the underlying data is fundamentally flawed. This creates a counterproductive loop where the artificial intelligence, rather than acting as a force multiplier for a busy workforce, becomes an additional administrative burden. In many cases, clinical decision support tools suffer from a phenomenon known as over-labeling, where the system identifies a vast majority of patients as high-risk. This usually occurs because the AI lacks the contextual nuance to distinguish between a historical medical condition that has been resolved and an active diagnosis. Consequently, the model functions logically based on its training, but the “noisy” nature of the input data leads to results that lack clinical utility.
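The over-labeling problem described above can be illustrated with a minimal Python sketch. It assumes each condition record carries an optional resolution date; the `Condition` class, the `HIGH_RISK_CODES` set, and the sample patient are hypothetical and exist only for illustration. A naive rule that scans the full history flags the patient, while a rule restricted to conditions active on the evaluation date does not.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional


@dataclass
class Condition:
    code: str                        # diagnosis code, e.g. ICD-10
    onset: date
    resolved: Optional[date] = None  # None means the condition is still active


# Hypothetical set of codes that a risk rule treats as high-risk.
HIGH_RISK_CODES = {"J18.9", "I50.9"}


def naive_high_risk(conditions: List[Condition]) -> bool:
    """Flags the patient if a high-risk code appears anywhere in the history."""
    return any(c.code in HIGH_RISK_CODES for c in conditions)


def active_conditions(conditions: List[Condition], as_of: date) -> List[Condition]:
    """Keeps only conditions that have started and are not yet resolved on `as_of`."""
    return [
        c for c in conditions
        if c.onset <= as_of and (c.resolved is None or c.resolved > as_of)
    ]


def is_high_risk(conditions: List[Condition], as_of: date) -> bool:
    """Flags the patient only if a high-risk condition is active on `as_of`."""
    return any(c.code in HIGH_RISK_CODES for c in active_conditions(conditions, as_of))


# Sample history: pneumonia resolved in 2019, hypertension still active.
history = [
    Condition("J18.9", date(2019, 1, 5), resolved=date(2019, 2, 1)),
    Condition("I10", date(2020, 6, 1)),
]

print(naive_high_risk(history))                 # history-wide rule
print(is_high_risk(history, date(2024, 1, 1)))  # active-only rule
```

The sketch only works if the source system actually records when a condition was resolved, which is exactly the kind of context that legacy records often lack.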

The inability of a system to recognize temporal nuances represents a secondary but equally damaging symptom of data unreadiness within modern health networks. For instance, when a predictive algorithm attempts to analyze patient throughput, it may encounter conflicting timestamps across different departmental modules. One system might record an admission time based on the initial registration at the front desk, while another records it only when a physical bed assignment is completed in a specific ward. For a human administrator, these discrepancies are often manageable through intuition and experience, but for an AI, they represent insurmountable barriers to pattern recognition. When medication records further complicate this picture by intermingling generic names, brand names, and internal alphanumeric codes without a unified mapping standard, the algorithm loses its ability to draw accurate correlations. This semantic fragmentation ensures that any insights generated by the system remain superficial at best and dangerously misleading at worst, necessitating an immediate shift toward data uniformity.
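As a rough illustration of the medication example, the following Python sketch maps brand names, generic names, and internal codes onto one canonical key. The `MED_SYNONYMS` table and the `MED-0042` code are invented for this example; a production system would more plausibly draw on a maintained vocabulary such as RxNorm than on a hand-written dictionary.

```python
from typing import List, Optional

# Hypothetical synonym table: every known spelling points to one canonical name.
MED_SYNONYMS = {
    "acetaminophen": "acetaminophen",
    "tylenol": "acetaminophen",
    "med-0042": "acetaminophen",  # invented internal code
    "ibuprofen": "ibuprofen",
    "advil": "ibuprofen",
}


def normalize_medication(raw: str) -> Optional[str]:
    """Returns the canonical name, or None when the entry has no known mapping."""
    key = raw.strip().lower()
    return MED_SYNONYMS.get(key)


records: List[str] = ["Tylenol", " acetaminophen ", "MED-0042", "Advil", "Zzz-unknown"]
mapped = [normalize_medication(r) for r in records]

# Unmapped entries are surfaced for review instead of being silently dropped.
unmapped = [r for r, m in zip(records, mapped) if m is None]

print(mapped)
print(unmapped)
```

Returning `None` for unknown entries, rather than guessing, keeps the unmapped residue visible so that a data steward can extend the mapping deliberately.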

Addressing the Structural Deficiencies of Legacy Architecture

The root cause of the current data crisis lies in the historical purpose for which most healthcare information systems were originally architected. Legacy frameworks, including Electronic Health Records and Customer Relationship Management systems, were designed as digital filing cabinets meant to facilitate transactional tasks like billing, compliance reporting, and sales tracking. They prioritize operational throughput and documentation over the high-fidelity data uniformity required for predictive modeling. Because these systems were never intended to serve as dynamic repositories for machine intelligence, they are naturally rife with inconsistencies that impede modern technological progress. For example, measurement ambiguity remains a persistent issue where temperature fields may mix Fahrenheit and Celsius readings without corresponding unit markers. While these minor errors were tolerable in an era of manual billing and human-led chart reviews, they are now the primary obstacles preventing the successful deployment of automated diagnostic tools and advanced population health analytics.
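The temperature example lends itself to a small sketch. The Python function below guesses the unit of an unlabeled body-temperature reading from plausible ranges and converts it to Celsius. The range boundaries are assumptions chosen for illustration, not clinical guidance, and the function name `to_celsius` is hypothetical.

```python
from typing import Optional, Tuple

# Assumed plausible ranges for human body temperature (illustrative only).
CELSIUS_RANGE = (30.0, 45.0)
FAHRENHEIT_RANGE = (86.0, 113.0)


def to_celsius(value: float) -> Tuple[Optional[float], str]:
    """Infers the unit of an unlabeled reading and returns (celsius, inferred_unit).

    Readings outside both ranges return (None, "unknown") so they can be
    reviewed by a person rather than silently converted.
    """
    if CELSIUS_RANGE[0] <= value <= CELSIUS_RANGE[1]:
        return round(value, 1), "C"
    if FAHRENHEIT_RANGE[0] <= value <= FAHRENHEIT_RANGE[1]:
        return round((value - 32.0) * 5.0 / 9.0, 1), "F"
    return None, "unknown"


for reading in (98.6, 37.2, 372.0):
    print(reading, to_celsius(reading))
```

A heuristic like this is a remediation step for historical data only. It works because the two assumed ranges do not overlap, and it cannot replace capturing the unit alongside the value in the first place.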

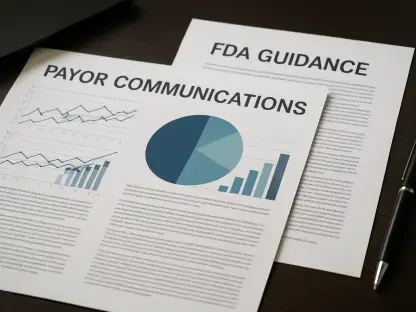

Bridging the gap between legacy storage and AI-ready infrastructure requires a rigorous commitment to data normalization that goes beyond simple technical cleaning. Estimates suggest that less than 20% of enterprise healthcare data can be used by advanced algorithms without extensive manual intervention. To resolve this, organizations must engage in deep terminology standardization, mapping free-text descriptions and inconsistent entries to recognized global standards such as ICD-10 or SNOMED CT. Furthermore, technical teams must reconcile duplicate patient records and fill in missing attributes that are essential for providing the clinical context the AI needs. While this technical remediation is a necessary reactive measure, it does not solve the long-term problem of how data is generated within the clinical environment. To truly modernize, healthcare leaders must look past the immediate symptoms of messy data and address the cultural and procedural habits that allow poor-quality information to enter the ecosystem in the first place.
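Reconciling duplicate patient records and filling in missing attributes can be sketched in a few lines of Python. The example below assumes records are plain dictionaries and treats two records as the same patient when the normalized name and date of birth match exactly. That matching rule and the field names (`name`, `dob`, `phone`, `mrn`) are simplifying assumptions; real patient matching generally needs fuzzier, more carefully validated logic.

```python
from collections import defaultdict
from typing import Dict, List, Tuple


def match_key(rec: Dict) -> Tuple[str, str]:
    """Builds a matching key from a case- and whitespace-normalized name plus DOB."""
    name = " ".join(rec["name"].lower().split())
    return (name, rec["dob"])


def merge_duplicates(records: List[Dict]) -> List[Dict]:
    """Merges records that share a key; the first non-empty value per field wins."""
    groups = defaultdict(list)
    for rec in records:
        groups[match_key(rec)].append(rec)

    merged = []
    for recs in groups.values():
        combined: Dict = {}
        for rec in recs:
            for field, value in rec.items():
                if combined.get(field) in (None, "") and value not in (None, ""):
                    combined[field] = value   # fill a gap from a later duplicate
                elif field not in combined:
                    combined[field] = value   # keep the field even if still empty
        merged.append(combined)
    return merged


records = [
    {"name": "Ana  Silva", "dob": "1980-03-02", "phone": None, "mrn": "A1"},
    {"name": "ana silva", "dob": "1980-03-02", "phone": "555-0100", "mrn": "B7"},
    {"name": "Li Wei", "dob": "1975-11-20", "phone": "555-0199", "mrn": "C3"},
]

for rec in merge_duplicates(records):
    print(rec)
```

Letting the first non-empty value win is a deliberate simplification. Where duplicates disagree on a populated field, as with `mrn` here, a real workflow would likely route the conflict to a data steward rather than choose automatically.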

Transitioning Toward Sustainable Data Governance Strategies

Sustainable success in the age of artificial intelligence requires a fundamental shift in how healthcare organizations manage information at the point of capture. To ensure that data remains a strategic asset rather than a liability, health systems must implement strict validation rules that prevent low-quality information from entering the system. This means moving away from a culture of workarounds, where clinicians and administrators rely on unofficial spreadsheets or informal knowledge to bypass the limitations of enterprise software. Instead, organizations should strive to return to a single, trusted source of truth where every piece of information is verified and standardized upon entry. Establishing clear roles for data stewardship is a critical component of this evolution, as it defines who owns and maintains the integrity of specific data sets. By demonstrating to frontline employees how their manual data entry directly impacts the performance of the AI tools meant to assist them, leaders can foster a deeper sense of accountability.
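Validation at the point of capture can be as simple as refusing to save an entry until it passes a set of explicit checks. The Python sketch below is one possible shape for such rules, applied to a vital-sign entry. The field names and the specific rules are hypothetical, chosen only to show the pattern of rejecting incomplete or ambiguous input before it reaches the system of record.

```python
from datetime import date
from typing import Dict, List

ALLOWED_TEMP_UNITS = {"C", "F"}


def validate_vital(entry: Dict) -> List[str]:
    """Returns a list of validation errors; an empty list means the entry is accepted."""
    errors = []

    if not entry.get("patient_id"):
        errors.append("patient_id is required")

    if entry.get("temp_unit") not in ALLOWED_TEMP_UNITS:
        errors.append("temp_unit must be 'C' or 'F'")

    if not isinstance(entry.get("temperature"), (int, float)):
        errors.append("temperature must be a number")

    recorded = entry.get("recorded_on")
    if not isinstance(recorded, date) or recorded > date.today():
        errors.append("recorded_on must be a date that is not in the future")

    return errors


good = {"patient_id": "P1", "temperature": 37.0, "temp_unit": "C",
        "recorded_on": date(2024, 1, 1)}
bad = {"patient_id": "", "temperature": "98.6", "recorded_on": date(2024, 1, 1)}

print(validate_vital(good))
print(validate_vital(bad))
```

Returning every error at once, rather than stopping at the first, gives the person entering the data a complete picture of what to fix, which matters when the goal is to reduce workarounds rather than encourage them.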

Failing to address these systemic issues carries significant operational risks that extend far beyond the failure of a few experimental technology projects. When data quality is poor, highly skilled analysts and data scientists spend upwards of 80% of their time on janitorial work (cleaning and prepping files) rather than performing the high-level analysis that could actually improve patient outcomes. This inefficiency leads to a state of analysis paralysis, where decision-makers are presented with conflicting reports from different departments and cannot determine which data set to trust. To break this cycle, executives should avoid the temptation of a complete, overnight enterprise overhaul. Instead, a more practical path forward involves targeting a single, high-stakes workflow, such as prior authorization or denial management. By resolving data inconsistencies within a specific, high-impact area first, organizations can prove the value of AI, establish new standards for data quality, and create a scalable blueprint for broader institutional transformation.