James Maitland is a leading expert in robotics and IoT applications within the medical field, dedicated to pioneering digital solutions that enhance patient care. His work focuses on the intersection of human behavior and technology, specifically how advanced modeling can predict and improve healthcare outcomes. In this discussion, we explore the integration of behavioral modeling into enterprise-scale healthcare systems, the role of digital twins in reaching underserved communities, and the rigorous governance required to maintain safety and empathy in automated patient engagement.

To build digital agents that accurately mirror human behavior, how do you manage the scale of hundreds of thousands of individual profiles and millions of responses? What specific steps ensure these behavioral scenarios remain representative of the real-world complexity found in diverse populations?

The foundation of this approach relies on a massive, robust dataset that captures the nuances of real human life rather than just static demographic points. We manage an ecosystem built on 2.9 million consented responses from a group of more than 400,000 individuals, which allows us to move beyond simple data points and into the realm of personality and habit. These digital agents are trained across more than 200 behavioral scenarios, ensuring that when we simulate a response, it reflects a person’s actual diet, lifestyle, and past health choices. To keep it representative, we conduct moderated interviews with participants to collect qualitative depth that traditional surveys often miss. This scale ensures that the AI versions act as accurate stand-ins for a wide spectrum of people, providing a mirror to the real-world complexity of the millions of customers we serve every day.

Underserved and hard-to-reach populations often face unique barriers to healthcare access. How do agentic twins help bridge the gap in gathering insights from these specific groups, and what workflow adjustments have been made based on the feedback these digital representatives provide?

Reaching underserved populations is a perennial challenge because traditional research often relies on people having the time and resources to participate in lengthy studies. Agentic twins bridge this gap by allowing us to maintain a “living” representation of these communities within our development environment, meaning their voices are present even when they aren’t physically in the room. This process allows us to test workflows and messaging in real time to see where a specific community might find a barrier, such as a lack of transportation or a misunderstanding of digital health jargon. Based on the feedback from these digital representatives, we have adjusted our workflows to be more inclusive, ensuring that our services are built not just for the average user, but for those who struggle most with access. This ongoing representation means that instead of a one-time check-in, the consumer is with us throughout the entire design and implementation lifecycle.

Identifying barriers like pharmacy trust and refill convenience is essential for improving medication adherence. How do digital twins pinpoint these specific pain points, and can you walk through the process of turning an insight about refill stress into a functional digital tool?

The digital twins allow us to simulate the entire patient journey and identify exactly where the “friction” occurs that leads to a patient dropping their treatment. By using this technology, our team significantly reduced the research time necessary to identify that trust in medication handling and the sheer inconvenience of refills were the primary deterrents. When the digital agents flagged “refill stress” as a major pain point, we broke it down step by step. First, we identified that for some patients, the mere thought of a complex refill process prevented them from even starting a medication. We then translated this into a functional requirement for our developers to build simplified, one-touch digital refill tools. This direct line from a simulated emotional barrier to a specific software feature ensures that our digital tools are solving real human anxieties rather than just offering generic technical fixes.

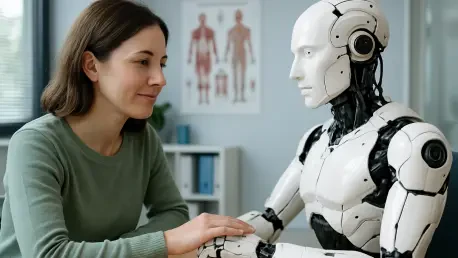

Consumers often express a need for a stronger connection to their pharmacists and clinicians during their treatment. How does your development team balance the efficiency of automated tools with the human element of care, and what indicators suggest these digital platforms actually improve patient comfort and retention?

Maintaining the human element in a digital-first world is a delicate balance, but we’ve found that technology should serve as a bridge to the clinician, not a wall. Our research with digital twins revealed that patients feel much more comfortable when they have a visible connection to their pharmacists, so we design our tools to emphasize that professional link. Automation handles the administrative burden, such as scheduling or basic reminders, which frees up the human experts to provide the high-value care patients crave. Indicators of success are seen in the increased willingness of patients to start a treatment plan when they feel supported by these easier digital tools. When a patient feels that the digital interface is an extension of their local pharmacy team, we see higher rates of retention and a more sustained commitment to their long-term health goals.

AI outputs require rigorous oversight to ensure safety, fairness, and a consistent tone. What specific governance frameworks are used to monitor these digital agents, and how do you handle “out of the ordinary” feedback that might contradict traditional consumer research?

We operate under a strict governance framework that prioritizes tone, fairness, and safety at every stage of the AI’s interaction. This framework is essential because, while AI is a powerful accelerator, it is not a replacement for human judgment and ethical oversight. If a digital agent provides feedback that seems “out of the ordinary” or contradicts established data, we don’t just dismiss it; we use it as a trigger for deeper investigation. This might involve engaging with real consumers in a more traditional, moderated setting to validate whether we have uncovered a new behavioral trend or if the AI has developed a bias. By treating “strange” data as an opportunity for further research rather than an error, we ensure that our digital agents remain grounded in reality while maintaining the core pillar of consumer-centricity.

What is your forecast for the future of agentic twins in the healthcare industry?

I believe that within the next decade, agentic twins will become a standard requirement for any healthcare organization looking to launch a new product or service. We are moving toward a future where “patient-centricity” is no longer a buzzword but a data-driven reality, where we can predict a community’s reaction to a new health initiative with incredible precision before a single dollar is spent on a rollout. These twins will evolve from being feedback tools into proactive health advocates that help us customize care at a hyper-individualized level. Eventually, this will lead to a more equitable healthcare system where the digital voices of the underserved are just as loud and influential as anyone else’s, fundamentally changing how we design for human wellness.