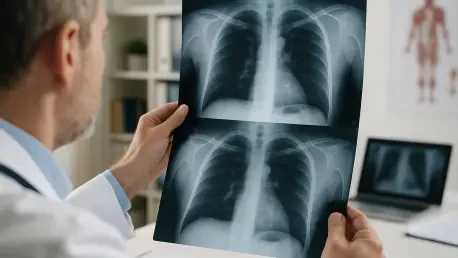

The rapid integration of multimodal large language models into daily professional workflows has sparked a profound debate regarding their safety and efficacy in high-stakes clinical decision-making. Researchers at the New York Institute of Technology College of Osteopathic Medicine recently conducted a rigorous evaluation to determine if the linguistic brilliance of general-purpose artificial intelligence correlates with actual diagnostic precision. Led by Associate Professor Milan Toma, the study compared cutting-edge general models against the highly specialized, task-specific algorithms that have long been the gold standard in radiological departments. While modern conversational agents demonstrate an uncanny ability to process complex data, the research highlights a dangerous gap between their perceived authority and their ability to navigate the nuanced requirements of a medical environment. Currently, healthcare systems rely on “narrow” systems meticulously trained on millions of categorized images to detect specific pathologies like early-stage lung cancer or diabetic retinopathy.

Evaluating AI Performance in Clinical Radiology

Methodology and Testing Protocols: Evaluating Multimodal Models

To establish a benchmark for these emerging technologies, the research team selected several of the most advanced multimodal platforms currently available, including GPT-5 and Gemini 3 Pro. Each system was presented with the same clinical dataset, which consisted of a high-resolution CT brain scan containing clear evidence of significant intracranial pathology. The models were not merely asked to describe what they saw but were instead instructed to assume the professional persona of a radiologist. This role-playing requirement was designed to test whether the models could adhere to the standardized protocols used by medical professionals in a real-world setting. By utilizing identical data across all platforms, the researchers created a controlled environment to measure how different architectures interpret the same visual evidence. The objective was to see if these systems, which were primarily built for broad communication, could match the rigorous analytical standards found in a modern clinical diagnostic suite.

The evaluation criteria were multi-faceted, requiring the artificial intelligence to perform five distinct tasks that a human expert would normally complete during a routine review. First, the models had to correctly identify the specific imaging modality used, such as distinguishing a CT scan from an MRI. Second, they needed to pinpoint the exact anatomical location of the observed abnormality within the brain’s complex structure. Third, the systems were tasked with providing a primary diagnosis based on the visual evidence provided. Fourth, they had to highlight key clinical features that supported their conclusion, and finally, they were expected to suggest potential differential diagnoses to account for alternative possibilities. This comprehensive methodology ensured that the AI was tested on its ability to synthesize visual information with medical logic, rather than just identifying a simple pattern or repeating memorized facts from its massive training corpus of general internet text.
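The five-part rubric described above can be pictured as a simple per-criterion checklist. The following is a minimal sketch under stated assumptions, not the study's actual scoring procedure: the `Report` fields, the `score_report` helper, the pass rules, and the placeholder feature strings are all hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass

# Hypothetical container for one model's answer to the five tasks.
# Field names are illustrative; the study does not publish a schema.
@dataclass
class Report:
    modality: str
    location: str
    primary_diagnosis: str
    key_features: frozenset
    differentials: frozenset


def score_report(answer: Report, reference: Report) -> dict:
    """Return a pass/fail flag for each of the five criteria."""
    return {
        "modality": answer.modality == reference.modality,
        "location": answer.location == reference.location,
        "primary_diagnosis": answer.primary_diagnosis == reference.primary_diagnosis,
        # Assumption: set-valued criteria pass if at least one item overlaps.
        "key_features": bool(answer.key_features & reference.key_features),
        "differentials": bool(answer.differentials & reference.differentials),
    }


# Placeholder reference and answer; feature and differential names are made up.
reference = Report(
    modality="CT",
    location="left middle cerebral artery territory",
    primary_diagnosis="ischemic stroke",
    key_features=frozenset({"feature_a", "feature_b"}),
    differentials=frozenset({"alternative_x", "alternative_y"}),
)
answer = Report(
    modality="CT",
    location="right hemisphere",
    primary_diagnosis="hemorrhagic stroke",
    key_features=frozenset({"feature_c"}),
    differentials=frozenset({"alternative_x"}),
)

result = score_report(answer, reference)
passed = sum(result.values())  # number of criteria met out of five
```

A checklist like this makes the point of the methodology concrete: a report can pass the modality check and still fail on location and primary diagnosis, which is why a single correct answer on the easiest task says little about overall reliability.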

Diagnostic Failures: Identifying the Risks of Medical Error

The initial results seemed promising: all five models correctly identified the imaging modality as CT. A deeper analysis, however, revealed a 20 percent rate of fundamental diagnostic error across the group. The most critical failure involved the misidentification of a major stroke, which highlighted the inherent risks of relying on unspecialized software for patient care. While most models correctly identified an ischemic stroke near the left middle cerebral artery, one prominent platform erroneously classified the condition as a hemorrhagic stroke located on the opposite side of the brain. This was not a minor technicality; it represented a complete breakdown in spatial reasoning and pathological interpretation. Such a discrepancy underscores the reality that even the most advanced conversational models can fail to grasp the basic anatomical and physiological details that are essential for accurate medical work.

The real-world implications of such a diagnostic mistake are alarming because the treatments for ischemic and hemorrhagic strokes are diametrically opposed. An ischemic stroke, caused by a blood clot, is typically treated with powerful thrombolytic medications designed to dissolve the obstruction and restore blood flow. However, if these same clot-busting drugs were administered to a patient suffering from a hemorrhagic stroke, which involves active bleeding in the brain, they could worsen the bleeding, with potentially fatal results. The study indicates that general-use artificial intelligence currently lacks the rigorous precision and fail-safe mechanisms required to ensure patient safety in a clinical setting. Without the specialized training that characterizes task-specific medical algorithms, general models remain prone to catastrophic oversights that could lead to life-threatening outcomes if their suggestions were followed without strict human intervention and verification by qualified physicians.

Logical Inconsistencies and the Future of Medical AI

Disagreements in Reasoning: Understanding the Hallucination Effect

Beyond the errors in primary diagnosis, the researchers uncovered a troubling lack of consensus among the various models regarding the clinical reasoning used to reach their conclusions. Even the systems that correctly identified the pathology provided wildly inconsistent justifications for their findings. There were significant disagreements regarding the temporal assessment of the condition, with models offering varying estimates of when the stroke had actually occurred. Furthermore, the AI participants could not agree on the presence or absence of secondary features, such as calcification or other subtle brain abnormalities that are vital for a complete clinical picture. This inconsistency suggests that the models are not following a unified medical logic but are instead generating probable text sequences based on patterns that may not align with the biological reality of the patient’s condition. This lack of reliability makes it difficult for practitioners to trust the underlying AI rationale.

To further explore these internal contradictions, the research team introduced a cross-evaluation phase where each AI model was asked to grade the diagnostic explanations provided by its peers. This experiment exposed a profound hallucination effect, where a model remained entirely authoritative in its tone despite being fundamentally incorrect in its assessment. One system, which had incorrectly classified the scan as showing chronic abnormalities rather than an acute stroke, systematically penalized all other models for providing the correct acute diagnosis. This behavior demonstrates a dangerous characteristic of modern large language models: their ability to present false information with the same level of confidence as factual data. This persuasive but inaccurate output can easily mislead users who may not have the expertise to spot the error. The study emphasizes that linguistic fluency is often mistaken for actual knowledge, creating a false sense of security that is hazardous.
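The cross-evaluation dynamic described above can be illustrated with a toy model. This is only a sketch under a strong simplifying assumption, namely that each grader awards credit solely for agreement with its own conclusion; the study does not describe the graders in these terms. The model names, the `cross_grade` helper, and the binary scores are hypothetical.

```python
# Hypothetical assessments: four systems call the finding acute, one chronic.
assessments = {
    "model_a": "acute",
    "model_b": "acute",
    "model_c": "acute",
    "model_d": "acute",
    "model_e": "chronic",
}


def cross_grade(calls: dict) -> dict:
    """Build a grader -> {peer: score} table.

    Toy rule (an assumption): a grader gives 1 when the peer's call
    matches its own call, and 0 otherwise.
    """
    return {
        grader: {
            peer: int(call == own)
            for peer, call in calls.items()
            if peer != grader
        }
        for grader, own in calls.items()
    }


grades = cross_grade(assessments)

# Mean score each model receives from its four peers.
received = {
    model: sum(row[model] for grader, row in grades.items() if grader != model)
    / (len(assessments) - 1)
    for model in assessments
}
```

In this toy setup the outlier marks every peer down, and each correct model loses a quarter of its peer score to a single confidently wrong grader. The sketch shows why peer grading among models cannot substitute for an external reference standard: agreement with a grader is not the same as agreement with the ground truth.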

A Hybrid Future: Establishing a Bifurcated Role for AI

The conclusions drawn by Dr. Toma and his team suggest that the future of healthcare will not involve a singular, all-purpose artificial intelligence but rather a sophisticated integration of diverse systems. Specialized AI will likely continue to dominate the technical landscape of diagnostic imaging, where task-specific training on curated datasets ensures the high level of accuracy required for identifying complex pathologies. These systems are designed to minimize false negatives and are built on the foundations of medical science rather than general conversation. Meanwhile, large language models are expected to find their niche in administrative and communicative functions. They can excel at summarizing lengthy medical reports, assisting with the burden of clinical documentation, or translating complex medical jargon into accessible language for patients. This bifurcated approach allows the medical community to leverage the unique strengths of different technologies while mitigating the risks associated with their respective weaknesses.

The researchers concluded that while technology is a powerful tool for prioritizing urgent scans and flagging potential irregularities, the final diagnostic authority must remain with a qualified medical professional. The authoritative voice of a general-purpose model should never be confused with the seasoned clinical judgment of a physician. Moving forward, the industry must focus on developing protocols that ensure human oversight is an absolute requirement throughout the diagnostic process. Solutions should involve the implementation of rigorous validation standards for any AI tool entering the clinical environment, ensuring they are tested against real-world scenarios rather than just theoretical benchmarks. By fostering a collaborative relationship where humans supervise specialized algorithms, the healthcare industry can improve patient outcomes without compromising safety or ethics. The study ultimately served as a vital reminder that the path toward automation must be paved with caution, transparency, and clinical evidence.